But that doesn’t mean you shouldn’t try to be discovered…

The other day, someone dropped an ad they saw on social media in our work Slack for an “AI Visibility Monitoring” service. The pitch: track how your brand shows up across ChatGPT, Perplexity, Gemini, and Claude. Get monthly reports. Benchmark against competitors. Stay ahead of the curve.

My first reaction was — yeah, I’d love that. Then I thought about it for a minute.

The concept of AI Visibility reporting makes total sense. AI tools are shaping how people discover brands — we’ve been thinking about this shift for a while — and if you can’t see how you’re being represented, you’re flying blind. Semrush has an AI Visibility Toolkit, Profound offers enterprise-grade citation analytics, and Otterly and Peec AI are competing for the mid-market. Agencies are packaging it as a managed service. Adobe just announced plans to acquire Semrush for $1.9 billion, in part to incorporate tools that help brands appear inside AI-generated search results. There’s real money here.

There’s just a tiny problem: the underlying data is a pile of hot garbage.

AI Visibility Data is Hot Garbage

There are two schools of thought for understanding AI-mediated activity on your site, and both of them have big problems.

Approach 1: Server logs (lots of data, low relevance)

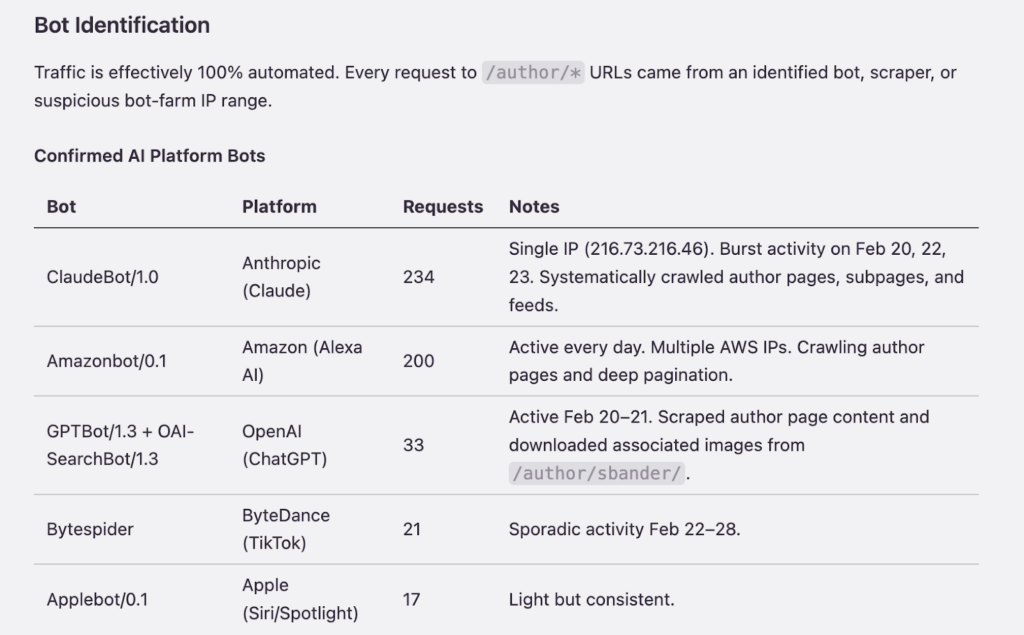

At one end, you have server log analysis: tracking how often AI bots crawl your pages. It’s the most expansive data available, but it has a huge problem with relevance.

An AirOps study analyzing over 548,000 retrieved pages across 15,000 prompts found that ChatGPT cited only about 15% of the pages it pulled in. The other 85%? Retrieved and discarded, never seen by the user, and often a “fluke” in the research process.

Cloudflare’s data shows that roughly 80% of all AI crawling is for model training, not for serving search results. Only about 18% of bot activity on your site has anything to do with answering a user’s question.

So if an AI visibility tool is tracking how often bots visit your pages and calling that “visibility,” the number it’s showing you is almost meaningless. A bot visiting your site and a user seeing your content in a chat response are two very different things, and most bot activity doesn’t even originate from a chat session.
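To make the gap concrete, here is a minimal sketch of how you might split bot hits in an access log by likely purpose rather than lumping them into one "visibility" number. It assumes the combined log format (user agent as the last quoted field). The user-agent tokens in `BOT_PURPOSE` and their purpose labels are our assumptions based on vendors' published crawler names, so check each vendor's current documentation before trusting the mapping.

```python
import re
from collections import Counter

# ASSUMPTION: token-to-purpose mapping; vendors change these, so verify
# against their current crawler documentation.
BOT_PURPOSE = {
    "GPTBot": "training",
    "ClaudeBot": "training",
    "OAI-SearchBot": "search_index",
    "PerplexityBot": "search_index",
    "ChatGPT-User": "user_triggered",
    "Perplexity-User": "user_triggered",
}

# In the combined log format, the user agent is the last quoted field.
UA_PATTERN = re.compile(r'"([^"]*)"\s*$')


def classify_line(line):
    """Return the assumed purpose of a known AI bot hit, or None."""
    match = UA_PATTERN.search(line)
    if not match:
        return None
    user_agent = match.group(1).lower()
    for token, purpose in BOT_PURPOSE.items():
        if token.lower() in user_agent:
            return purpose
    return None


def summarize(lines):
    """Count AI bot hits per assumed purpose across log lines."""
    counts = Counter()
    for line in lines:
        purpose = classify_line(line)
        if purpose:
            counts[purpose] += 1
    return counts
```

Even with a split like this, only the `user_triggered` bucket has any claim to representing a chat session, and a retrieved page is still not a cited page.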

Approach 2: Referer traffic (undercounts everything)

The other approach is referer-based tracking; i.e., analyzing traffic that arrives with a referer header from ChatGPT, Perplexity, etc. This is what most analytics setups can actually capture, and we’ve written about the problems before.

Even before AI, referer traffic had notorious undercounting issues. And now, just for fun, when someone clicks a link in a ChatGPT (or other consumer AI bot) response, the referer header passes through. Sometimes. Links in AI responses are frequently broken due to formatting issues from the training data. Users copy-paste URLs, type them manually, or come back days later through a Google search they wouldn’t have done without the AI conversation.

There might also be non-relevant semi-automated traffic in the mix. We don’t yet know how assisted browsing — things like Chrome’s AI features or ChatGPT’s Atlas browser — will show up in referer data. Is that “AI traffic”? Is it “direct”? Who knows.

In GA4, all of this shows up as a jumble of referral traffic, direct visits, and organic sessions with no clean way to connect them back to an AI interaction. The tools claiming to solve this attribution problem are working with the same broken signals everyone else has.
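For reference, referer-based tracking boils down to something like the sketch below: match the referring hostname against a list of known AI tools. The hostnames in `AI_REFERRER_HOSTS` are our assumptions about where these tools currently live, not an official list, and the function is hypothetical rather than how GA4 or any vendor does it.

```python
from urllib.parse import urlparse

# ASSUMPTION: hostnames we believe these tools send as referrers today.
AI_REFERRER_HOSTS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "gemini.google.com": "Gemini",
    "claude.ai": "Claude",
}


def classify_referrer(referrer):
    """Map a referrer URL to an AI tool name, 'other', or 'direct_or_unknown'."""
    if not referrer:
        # Copy-pasted URLs, typed URLs, and stripped headers all land here.
        return "direct_or_unknown"
    host = (urlparse(referrer).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    return AI_REFERRER_HOSTS.get(host, "other")
```

Notice where the undercounting lives: every AI-influenced visit that arrives without a referer, or by way of a later Google search, falls into `direct_or_unknown` or `other`, and nothing in the request can tell you otherwise.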

Consistency Problems, Oh My

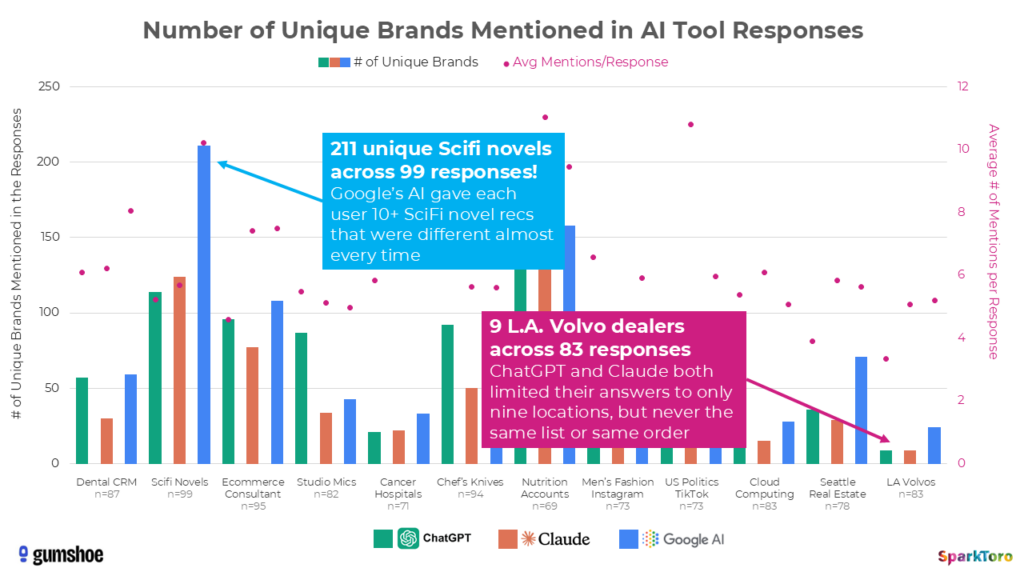

Even if you could solve the data collection issues, there’s a deeper problem with replicability. SparkToro’s research found that ChatGPT and Google AI Overviews return the same brand recommendation list less than 1% of the time when you run the same prompt repeatedly. The same list in the same order? Less than 0.1%.

Source: SparkToro

Think about what that means for a “visibility score.” You’re measuring a target that moves every time you look at it. Run the same query tomorrow and you’ll get different brands, different citations, different results. Heck, run it again in five minutes and see what happens.
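If you want to check this on your own prompts, one simple way is to run the same prompt several times, record the brand list from each run, and compare every pair of runs. The sketch below is our own illustration, not SparkToro's methodology; the function name and the pairwise approach are assumptions.

```python
from itertools import combinations


def list_agreement(runs):
    """Return (same-brands rate, same-order rate) across all pairs of runs.

    Each run is a list of brand names in the order the tool returned them.
    """
    pairs = list(combinations(runs, 2))
    if not pairs:
        raise ValueError("need at least two runs to compare")
    same_set = sum(1 for a, b in pairs if set(a) == set(b))
    same_order = sum(1 for a, b in pairs if list(a) == list(b))
    return same_set / len(pairs), same_order / len(pairs)


# Hypothetical brand lists from four runs of one prompt.
runs = [
    ["A", "B", "C"],
    ["B", "A", "C"],
    ["A", "B", "D"],
    ["A", "B", "C"],
]
set_rate, order_rate = list_agreement(runs)
print(f"same brands: {set_rate:.0%}, same order: {order_rate:.0%}")
```

If the rates come back near zero, a single monthly snapshot of "who gets recommended" is telling you very little.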

Not all hope is lost – it’s possible that some defined user journeys in a chatbot could actually lead to more repeatable results, but we don’t have a way to measure that yet. When we do, we’ll be talking about it!

We Tried It Ourselves

I promise you, we want AI visibility reporting to work.

Earlier this year, we built an “MVP” AI Visibility page into our internal monthly reporting: a Looker Studio dashboard filtered to ChatGPT, Perplexity, Gemini, and Claude referral traffic. What we got was about 13 referral sessions per month and a handful of leads from AI tools.

The thing is, we know that number is wrong, because our leads told us. The number of on-the-record leads who mentioned using AI tools to find us exceeds what GA4 shows by 2-3x. The actual AI-influenced activity on our site is higher than what any analytics tool is capturing. When we looked at our server logs, we found AI bots trying to hit pages that don’t even exist on our site but are present on most WordPress blogs, probably as research “flukes” like the ones I mentioned earlier.

Semrush’s AI Visibility report was less than helpful; the data quality was inconsistent, access was limited on our tier, and the metrics didn’t connect to anything actionable. After sitting down as a team to talk it through, the honest conclusion was: the reporting tools aren’t good enough. We could either surface limited, imperfect metrics from GA4, pay for a platform we don’t trust, or rethink the whole thing.

Rethinking the Whole Thing

If we were building an AI visibility service from scratch today, we’d probably skip the visibility dashboards. Not because measurement doesn’t matter — it does. But because the measurement isn’t reliable enough to make any business decisions based on it, and pretending otherwise is a form of willful ignorance. We’d focus on the things that are actually within your control and known to improve how AI tools discover and cite your content:

- Structured data

- Long-form, comprehensive answers to real questions

- In-depth product and service pages

- Content that builds topical authority

- The kinds of things that work across multiple channels — not just AI, but organic search, social, and direct discovery too

A lot of this comes down to fundamentals that Google has always rewarded — relevant content, good structure, topical authority. The advantage of this approach is that it has value regardless of whether AI tracking gets better.

And here’s the thing: we already know AI is driving real business. Remember those leads I mentioned (the ones who said they found us through AI tools)? That’s happening whether or not it shows on a dashboard. The inability to measure something doesn’t mean it isn’t happening. Investing in a high-quality site that meets users’ needs beats stressing over a dashboard that’s all noise and no signal.

The “Yet” Matters

AI Visibility reporting isn’t a “never.” It’s a “not yet.”

The concept is genuinely valuable; otherwise nobody would be chasing it (cryptocurrency being the exception). Knowing how AI tools represent your brand matters, and it’s going to matter more as these platforms take a bigger share of how people find and buy things. The tools will improve, referer standards will probably get better, citation tracking will mature, the data will catch up… someday.

But right now, in 2026, it’s too early. The companies selling polished dashboards and monthly visibility scores are packaging confidence they haven’t earned yet.

Build a site your users need today. When the measurement catches up — and it will — you’ll already be in the conversation.